Thinking in Systems

This is one of the best books I read in 2021. I have highlights on most pages, which makes this summary long. Highly recommended for any designer or problem solver.

This book is an introduction to thinking in systems.

Why thinking in systems is important

- When facing a problem, we're often too focused on its surface: visible symptoms, events of the day, notable effects, or influential people.

- We've also been educated by reductive science to analyze (decompose) problems and look at the isolated parts, rather than see systems as a whole.

- It's easy to point at these surface-level components and attribute the blame to them, when in fact the problems are deeper, or systemic.

- Systems thinking helps us take a step back and see the problems in the underlying structure that lead to the behaviors we're observing. It helps us find the true leverage points to effect change.

What is a system?

- A set of things interconnected in some way that leads to its own behavior.

- A pile of sand is not a system, because taking away or adding more sand doesn't change the nature of the thing.

- A soccer team is a system, because taking away funding, players, fans, brand, etc all lead to changes in what it is.

- Multiple things can affect the system from the outside, but the way the system responds to these pressures is a characteristic of itself.

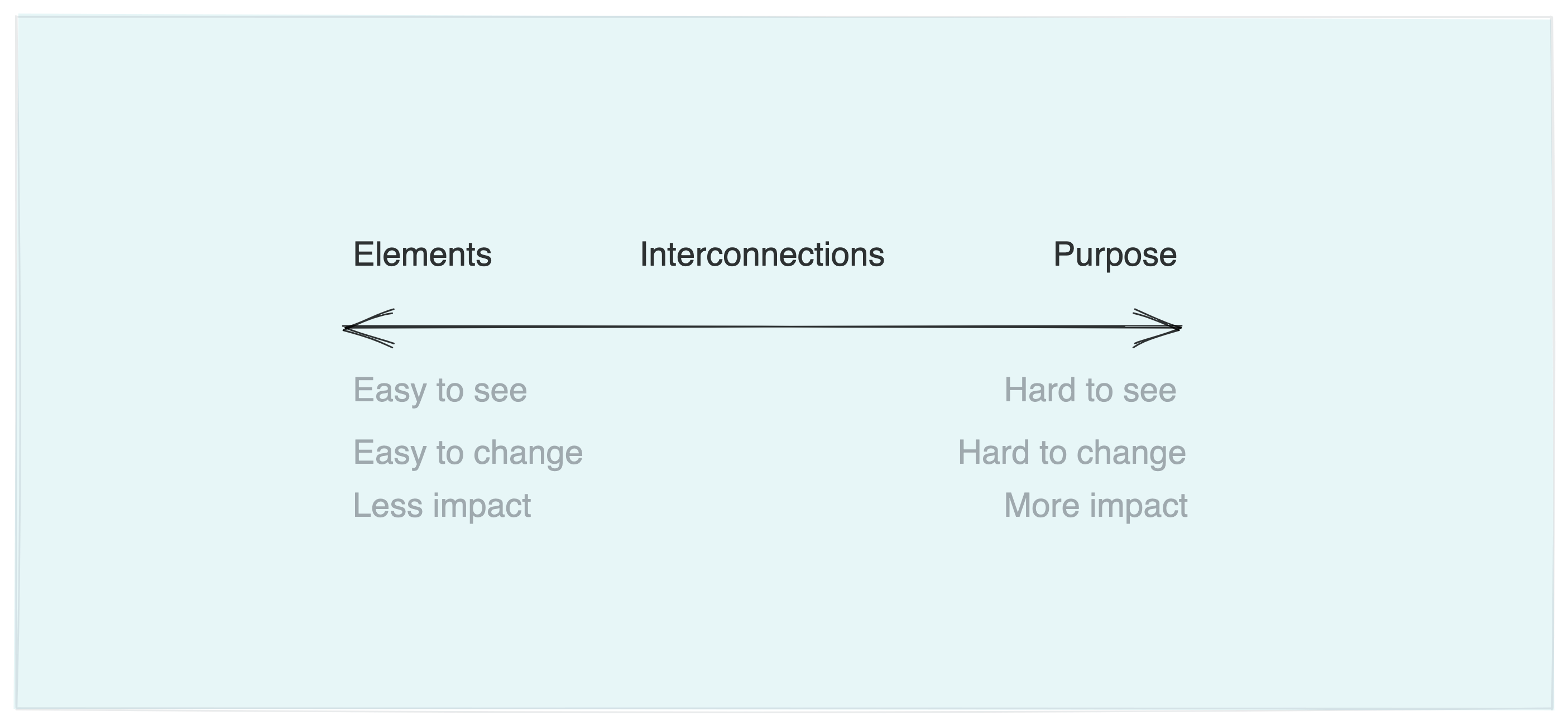

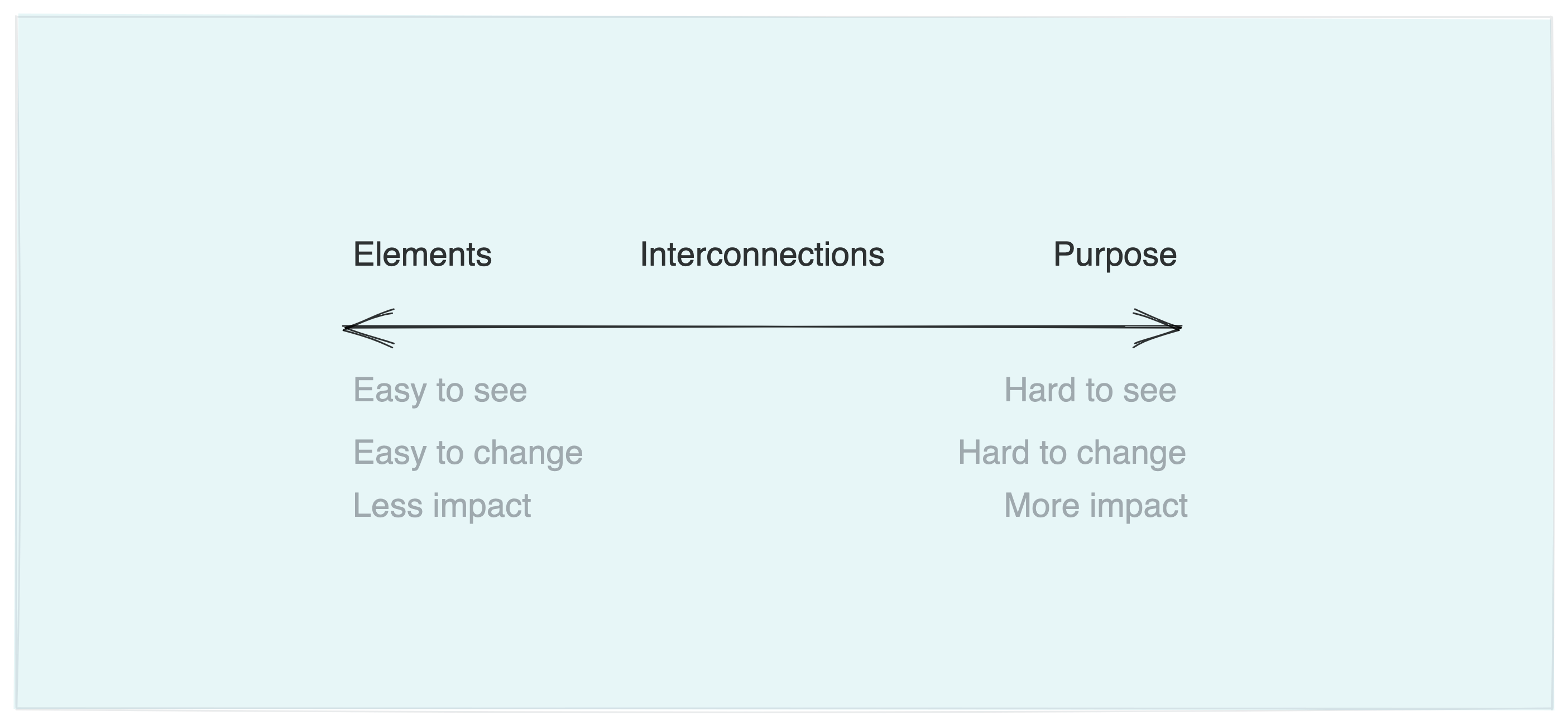

A system must consist of 3 different things:

- Elements

- Usually the easiest part to notice.

- The list of elements on a system could be endless.

- Interconnections

- What are the rules governing the interactions?

- Do the parts affect each other?

- Do the parts together produce a different effect than in isolation?

- Function or purpose

- Is the result of the many sub-purposes embedded in each element.

- The best way to figure out a system's purpose is to watch how it behaves and the results it produces. "What a thing is for is what the thing does."

- Changing the players on a team (elements) may reduce performance, but doesn't change the goals or rules of the game.

- Changing a leader (CEO, president, etc.) is only impactful if that leader is determined to change the rules or the purpose of the system. Otherwise you're just changing an element.

To understand a system, one must not only understand the parts, but how they relate to each other:

You think that because you understand "one" that you must understand "two" because one and one make two. But you forget that you must also understand "and". — Sufi teaching story

Understanding system behavior over time

The book introduces a handy diagram for representing systems. It consists of stocks, flows, faucets, and clouds.

Stocks and flows

- The human mind seems to focus more easily on stocks than on flows. We're usually more attracted to counting "how much there is" than "how much is coming in/out".

- We also focus more on inflows than on outflows. Therefore, sometimes we miss that in order to increase a stock, we can increase inflows but also decrease outflows.

- Stocks don't respond immediately to changes in flows.

- They can take time to change, causing delays.

- It's easy to forget about these delays and how fast they can compound.

- If you're not aware of these delays, you may give up on change too soon. Things that took decades to accumulate will probably take decades to get rid of.

- Minor changes to how delays interact may cause big effects on how the system oscillates.

- This also creates stability. Stocks allow time for maneuvers and changes.

- It also allows for inflows and outflows to operate independently of each other.
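
The points above about inflows, outflows, and slow-moving stocks can be made concrete with a small simulation. This is only a minimal sketch, not something taken from the book: the function name `simulate_stock` and the bathtub numbers are assumptions chosen for illustration. It suggests that raising the inflow and lowering the outflow by the same amount move the stock identically, and that the stock changes step by step rather than all at once.

```python
def simulate_stock(initial, inflow, outflow, steps):
    """Return the stock level at every step, given constant flows."""
    levels = [initial]
    for _ in range(steps):
        # A stock only changes through its flows, and it cannot go below zero.
        levels.append(max(0.0, levels[-1] + inflow - outflow))
    return levels


# Hypothetical bathtub: 50 units of water, flows measured in units per minute.
baseline = simulate_stock(50.0, inflow=5, outflow=5, steps=10)
more_inflow = simulate_stock(50.0, inflow=7, outflow=5, steps=10)
less_outflow = simulate_stock(50.0, inflow=5, outflow=3, steps=10)

print(baseline[-1], more_inflow[-1], less_outflow[-1])
```

With equal flows the level stays put. Opening the faucet a bit more or closing the drain a bit more produces the same gradual rise.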

Feedback processes

- A change in a stock sends feedback that adjusts the flows.

- Balancing feedback

- These operate in order to keep the system's stocks at a set goal. If there's too much, they increase the outflows. If there's too little, they increase the inflows.

- E.g., a thermostat.

- Reinforcing feedback

- Loops that amplify change: the more the stock grows, the faster it grows, leading to exponential growth.

- Any system with a reinforcing feedback will, at some point, face a balancing feedback that will constrain its growth.

- Information delivered by feedback can only affect future behavior. Trying to fix current behavior based on past feedback causes you to always be one step too late.

- This is one reason why economic models fail: they assume people will react instantly to a rise in prices. But things take time.

- It's also why hiring has to always be quicker than attrition. You should be hiring not only as a response to people who already left, but also those who may leave soon.
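
The two kinds of feedback loops described above, and the effect of acting on stale information, can be sketched in a few lines. This is a rough illustration under stated assumptions rather than a model from the book: the function names, the `delay` parameter, and all the numbers (a thermostat goal of 20 degrees, 10% growth per step) are hypothetical.

```python
def balancing(stock, goal, adjust_rate, steps, delay=0):
    """Balancing loop: each step corrects a fraction of the perceived gap.

    With delay > 0 the correction is based on the stock level from
    `delay` steps ago, i.e. on outdated feedback.
    """
    history = [stock]
    for t in range(steps):
        perceived = history[max(0, t - delay)]
        stock += adjust_rate * (goal - perceived)
        history.append(stock)
    return history


def reinforcing(stock, growth_rate, steps):
    """Reinforcing loop: the inflow is proportional to the stock itself."""
    history = [stock]
    for _ in range(steps):
        stock += growth_rate * stock
        history.append(stock)
    return history


# Thermostat-like loop with instant feedback: approaches 20 without passing it.
room = balancing(stock=10.0, goal=20.0, adjust_rate=0.5, steps=10)

# Same loop, but reacting to readings that are 3 steps old: it overshoots.
delayed = balancing(stock=10.0, goal=20.0, adjust_rate=0.5, steps=12, delay=3)

# Compound growth: every step adds 10% of the current stock.
savings = reinforcing(stock=100.0, growth_rate=0.1, steps=10)

print(round(room[-1], 2), max(delayed), round(savings[-1], 2))
```

In this sketch the undelayed loop closes in on its goal, while the delayed one shoots past it and then swings back below it, which is the "one step too late" effect in miniature.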

Characteristics of well-working systems

- Resilience

- Resilience is the ability to bounce back into shape after pressure has been applied. Its opposite is brittleness or rigidity.

- Resilience is not the same as being static. Many resilient systems are dynamic and flexibility in shape is what makes them resilient.

- Resilience is harder to measure than stability, so people often make the mistake of prioritizing the latter, or productivity, or another easily quantifiable metric.

- Self-organization

- The ability of a system to make its own organization more complex over time.

- It produces heterogeneity and unpredictability.

- Requires freedom, experimentation, and disorder.

- It can be scary for individuals in positions of power.

- Hierarchy

- Complex systems can only evolve if there are simpler subsystems that compose them. This is what naturally creates hierarchy.

- Hierarchies reduce the amount of information that is necessary in any given part of the system.

- There must be a balance between centralized control and autonomy for most systems to perform.

- If a cell breaks free, if a player gives up on the rules, if a teammate takes too much time off, the system under-performs.

- If there's too much central control, subsystems can choke or die; there's no freedom to experiment and create resilience for the long-term.

Why systems surprise us

- We can't know how the world is objectively, we can only create models. Our models of the world are good enough (they're essential to our survival), but they're always partial.

- We forget that flows can be independent from each other. Flows don't affect other flows directly, only stocks.

- We're too fascinated by the events, and forget to look at the underlying structure.

- We are very bad at thinking non-linearly, and systems are very rarely linear.

- All the boundaries we draw are imaginary: in reality, all systems are interconnected. We need to narrow our scope when thinking about them, but we may forget that the boundaries are artificial. (The clouds in the diagrams are always dangerous: in reality they're also stocks, which are part of the system!)

- One of the most important things when thinking in systems is reconsidering the boundaries. Doing so may reveal new ways of looking at the problem.

- Seeing the system from the inside blinds us to the big picture. Fishermen overfish, managers hate unions, investors make blunders all the time. They all have imperfect knowledge of the system, and their actions feel perfectly rational in the moment. Blaming individuals is rarely productive in creating a better outcome.

System traps

- Policy resistance

- In some systems all actors are pushing in different directions. Any policy to push it towards one side will activate feedback loops to push it to the opposite side.

- One way to deal with this is to overpower it, and push hard enough to get to the desired effect. But this is very risky: the stronger the push, the stronger the balancing feedback.

- Another solution is to just let go. Save the effort and focus on other areas of the problem.

- The most effective solution is to find an overarching goal that all actors can agree on.

- Tragedy of the commons

- When a shared resource is eroded by use, and when the erosion doesn't send any feedback to the actors using it.

- Three ways to avoid it:

- Educate and exhort: appeal to morality and threaten transgressors with social disapproval.

- Privatize the commons or add feedback loops: make each actor feel the pain of their piece of stock being eroded.

- Regulate the commons: quotas, bans, permits, taxes, incentives.

- Drift to low performance

- When people's perception of the system's state is biased towards bad news or events, and their expectations of performance are lowered.

- How to avoid it:

- Keep performance standards absolute.

- Make high performance more visible than bad performance.

- Escalation

- When an increase in one stock leads to an increase in another stock, and so on (e.g., lifestyle inflation).

- How to avoid it:

- Avoid getting into the cycle in the first place.

- Refuse to compete (e.g., quit Instagram!).

- Success to the successful

- When the rewards of winning make it more likely that you'll win again.

- How to avoid it:

- Diversify and innovate, so you can compete in novel ways.

- Antitrust loops or other limits to monopolies.

- Leveling out the playing field periodically, so everyone has an equal shot.

- Shifting the burden to the intervenor (addiction)

- When an actor becomes dependent on a policy to solve a symptom of a problem, without solving the underlying issue.

- How to avoid it:

- Strengthen the system's ability to shoulder its own burdens.

- Favor long-term solutions over short-term ones.

- Rule beating

- When actors technically, but perversely, obey the rules without serving the rules' original goals.

- E.g., departments spending their entire budget not because they have relevant projects, but in order to secure the same funding next year.

- The way out:

- Redesign the rules to focus creative energy on achieving the goal, not satisfying the rule.

- Seeking the wrong goal

The gross national product does not allow for the health of our children, the quality of their education, or the joy of their play. It does not include the beauty of our poetry or the strength of our marriages, the intelligence of our public debate or the integrity of our public officials. It measures neither our wit nor our courage, neither our wisdom nor our learning, neither our compassion nor our devotion to our country, it measures everything in short, except that which makes life worthwhile [...] it is not the record of our life at home but the fever chart of our consumption.

- Systems will produce whatever goal they're designed to produce.

- The way out:

- Design rules carefully to reflect the welfare of the system.

- Do not measure effort, measure results.
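
Of the traps in this section, the tragedy of the commons lends itself most easily to a toy simulation. The sketch below is an illustration under assumptions that do not come from the book: the shared resource regrows logistically, and the function name `simulate_commons`, the capacity, the regrowth rate, and the harvest sizes are all hypothetical. It only illustrates the "regulate the commons" remedy, in the form of a per-actor quota.

```python
def simulate_commons(actors, harvest_each, steps, capacity=100.0, regrowth=0.2):
    """Return the shared stock left after `steps` rounds of harvesting.

    Assumes logistic regrowth: fastest at half capacity, zero when the
    resource is either empty or full.
    """
    stock = capacity
    for _ in range(steps):
        regen = regrowth * stock * (1 - stock / capacity)
        # Actors harvest a fixed amount regardless of the state of the stock,
        # i.e. the erosion sends them no feedback.
        stock = max(0.0, stock + regen - actors * harvest_each)
    return stock


# Four actors taking 2 units each exceed the best possible regrowth (5 units).
unregulated = simulate_commons(actors=4, harvest_each=2.0, steps=100)

# A quota of 1 unit each keeps total harvest below what the resource can regrow.
with_quota = simulate_commons(actors=4, harvest_each=1.0, steps=100)

print(unregulated, round(with_quota, 1))
```

Under these assumptions the unregulated commons is exhausted, while the quota lets the stock settle at a level where regrowth matches the harvest.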

Leverage points: how to effect change

In order of impact, here are the leverage points for systemic change:

- Transcend paradigms

- All our paradigms are human creations. We can detach ourselves from them, realizing that no one paradigm is "true".

- Letting go of preconceived notions, creating opportunity for new interpretations and models.

- Paradigms

- Our collective set of beliefs about how the world works. They're the origins of our goals and therefore of the systems we create.

- To achieve change:

- Cultivate new ways of seeing.

- Keep critiquing and pointing out failures in the current paradigms.

- Don't waste time with reactionaries. Work with the vast middle ground of people who are open-minded.

- Goals / Purpose

- Leaders have huge leverage if they're willing to change a system's goals.

- Self-organization

- Cultivate diversity, variability, experimentation, chaos. Requires letting go of control.

- Rules and incentives

- What if students graded teachers? What if politicians had to use public services only? etc.

- Information flows

- Missing information flows are one of the most common systemic problems.

- Information needs to be delivered in the right place and format: it's not enough to let everyone know how bad something is, if they don't have individual stake. It's not enough to give transparent feedback, if it's going to be read in a biased way.

- Reinforcing feedback loops

- Reducing growth, not accelerating it, usually leads to more sustainable systems.

- Balancing feedback loops

- If there's no transparency in feedback, or if it's too delayed, loops may create unfortunate effects.

- Feedback may not be in adequate proportion to the effect it's trying to contain. This can get out of balance with the introduction of new technologies, incentives, etc., and should be reviewed often.

- Delays

- Systems can't change rapidly if the delays are large.

- It's very hard to change delay time, so it's usually easier to just slow down the whole system, so it can react to the feedback in a more cohesive manner.

- Stock-flow structures

- Rebuild the system. This is usually a very expensive and slow process.

- The best leverage here is to build the system properly in the first place.

- After it's built, efforts should be focused on understanding the bottlenecks and limitations and using it to maximum efficiency.

- Buffers

- Changing the size of a buffer can increase resilience or the speed of feedback.

- Numbers, constants, and parameters

- People focus a lot on fine-tuning percentages and increasing goals, when these rarely have an important effect on systemic change.

- Turning the "faucets" may change the rates at which things flow, but doesn't necessarily affect the end behavior like we expect.

Living in a world of systems

- Systems are unpredictable, and our reductionist science does not help us control them.

- How to live with the systems (a series of final tips):

- Observe before you act. See how a system behaves.

- Expose your ideas and diagrams to other people, write them down with rigor, put them to the test. Explore multiple paths, and don't get attached to one single idea.

- Avoid biased, late, or missing information.

- Avoid trusting only the numbers: no one can quantify justice, love, democracy, freedom, truth. But if nobody is speaking out for them, they'll cease to exist.

- Create meta-feedback loops, so that feedback loops are adjusted from time to time.

- Design for intrinsic responsibility, so that actors are accountable for their actions.

- Celebrate complexity.

- Think long term.